AI Agents in 2026: Why Most Enterprise Deployments Are Still Theater

Somewhere right now, a Fortune 500 CTO is presenting an AI agent demo to the board. The agent books meetings, summarizes contracts, and routes support tickets flawlessly. Six months later, it's quietly turned off because nobody could explain to the legal team what it actually did, or undo what it got wrong. Enterprise AI in 2026 has a pattern: impressive in the demo room, invisible on the income statement.

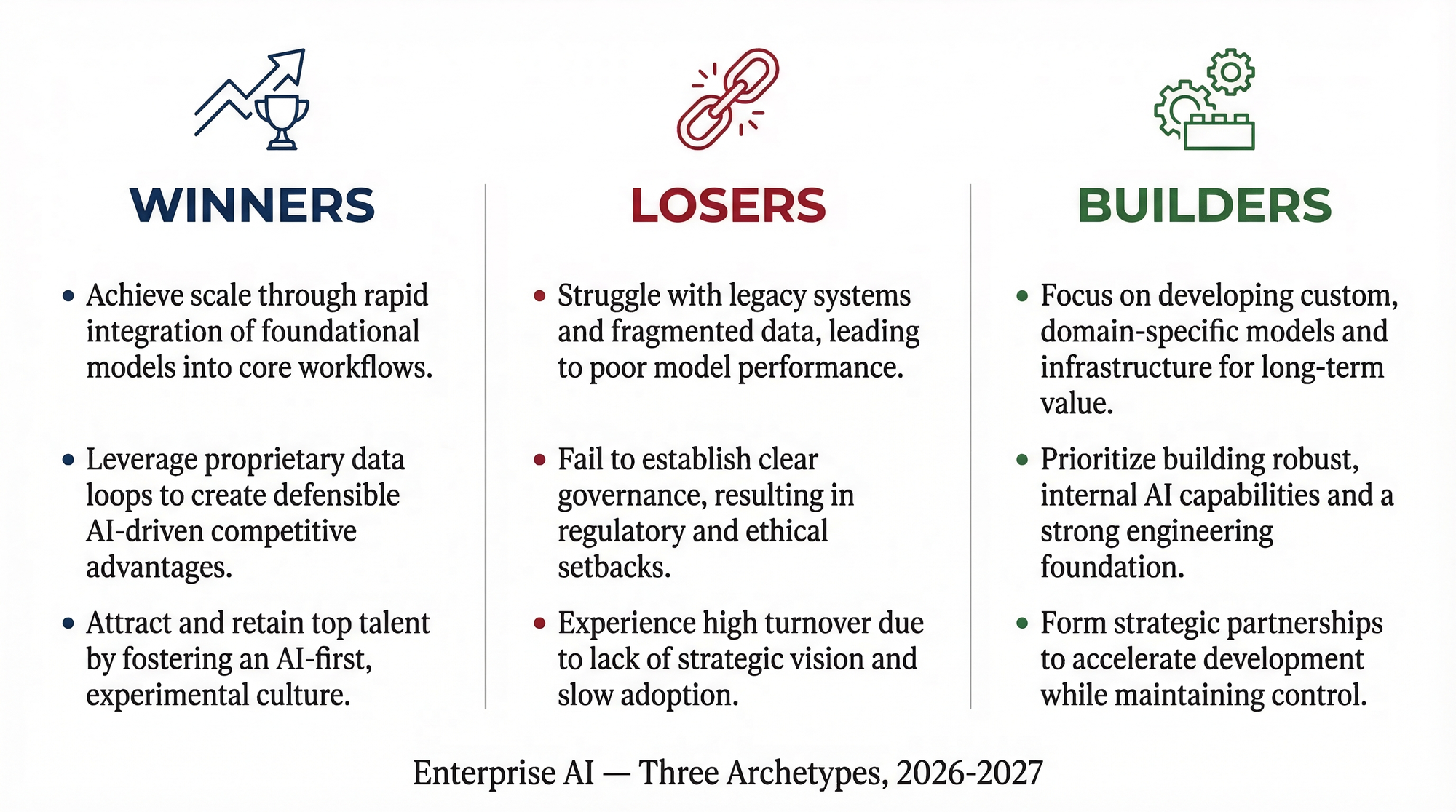

The enterprise AI agent market is drowning in capability theater: sophisticated systems that benchmark beautifully but fail the boring operational requirements that actually matter in production. The winners won't be the most intelligent agent frameworks. They'll be the ones boring enough for a Fortune 500 legal team to approve.

The Demo Is Not the Product

You can spin up a multi-step autonomous agent workflow in an afternoon. Watching one run in a sandbox is genuinely impressive. The problem starts the moment you move it out of the sandbox.

In production, an agent touches real data, triggers real API calls, modifies real records. The failure mode that actually kills enterprise deployments isn't hallucination or task failure (those are visible and recoverable). The failure mode is the absence of auditability, rollback mechanisms, and compliance-grade logging. You don't discover that gap in the demo. You discover it six months later, in a meeting with your General Counsel.

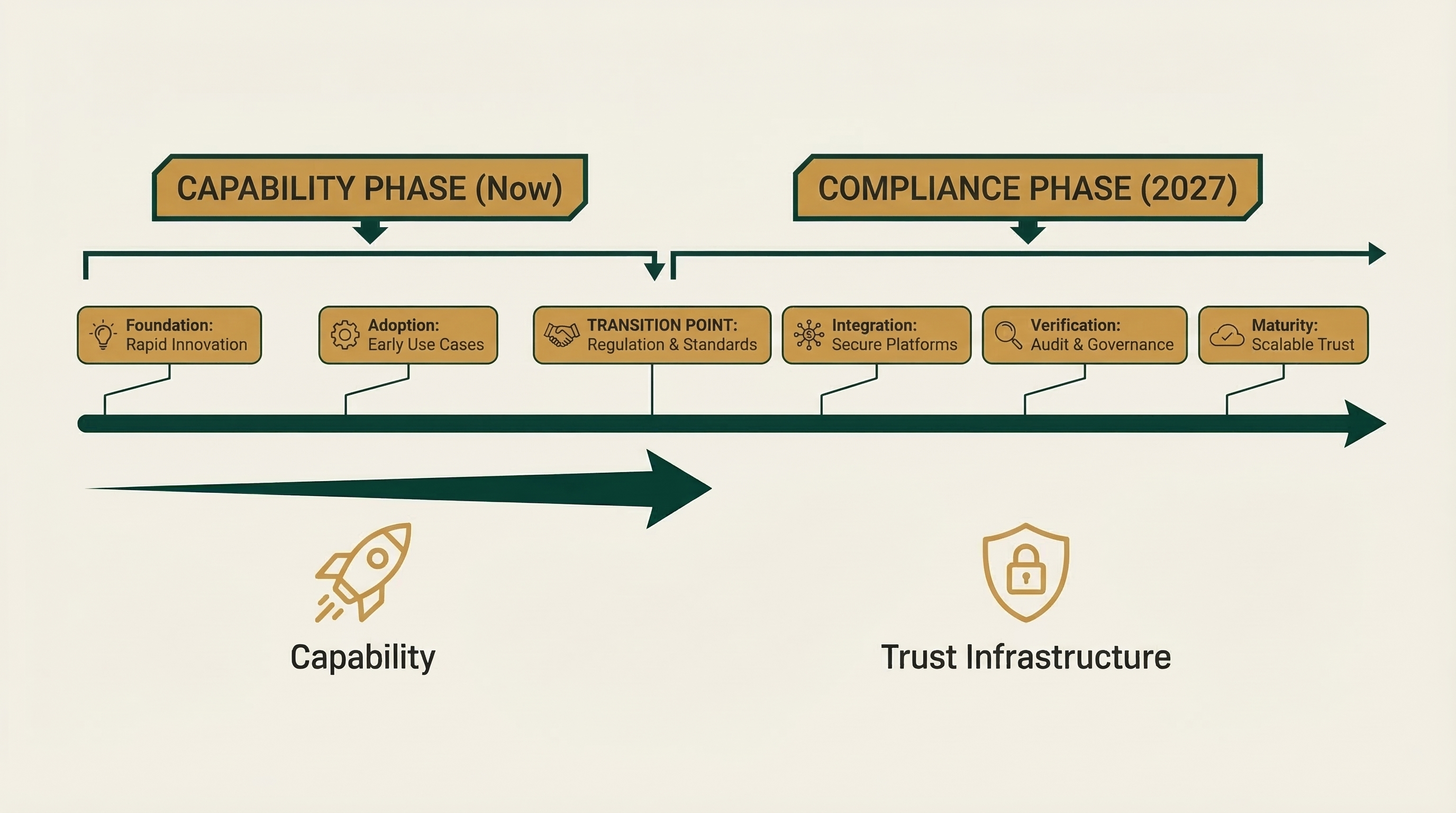

Enterprise procurement cycles run 6 to 18 months. Agent hype cycles run 3 to 6. These timelines don't sync up, and the result is a graveyard of POCs. In conversations with peers across regulated industries, the pattern is consistent: the majority of enterprise AI agent pilots launched in 2025 will never reach full production. Not because the AI failed. Because the operational infrastructure was never built. This isn't pessimism. It's what happens every time capability outpaces the enterprise readiness layer.

The tell is when the AI industry starts talking about partner ecosystems instead of benchmark scores. That language shift has already happened. The capability wars are over. The compliance wars have begun.

The Four Killers: What Actually Terminates Enterprise Agent Deployments

Every operator I speak to reports the same pattern. The agent works. Then it hits one of four walls.

Auditability failure. Most agent frameworks produce logs that tell you what happened, not why. In every regulated industry from finance to healthcare, "why" is the legal requirement. An agent that moved $2M in vendor payments cannot tell a SOX auditor "the model decided." That answer ends the deployment. It also opens the door to personal liability for the executives who approved it. In financial services, a single audit finding tied to an unexplainable automated decision can trigger regulatory scrutiny that costs far more than the efficiency the agent was supposed to deliver.
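
What does a log that records "why" look like in practice? Below is a minimal Python sketch built on a hypothetical in-house schema (`AuditRecord`, `AuditLog`), not any vendor's format. Each entry stores the action, its exact inputs, a plain-language rationale, and the evidence the agent consulted, and each entry is hash-chained to the previous one so that later edits to a past record are detectable. The agent ID, document IDs, and amounts are illustrative.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Optional

# Hypothetical schema: field names are illustrative, not a standard.
GENESIS_HASH = "0" * 64  # stand-in "previous hash" for the first entry


@dataclass
class AuditRecord:
    """One agent action, with the 'why' stored next to the 'what'."""
    agent_id: str
    action: str              # what the agent did
    parameters: Dict         # the exact inputs it acted on
    rationale: str           # plain-language reason, written for an auditor
    evidence: List[str]      # IDs of documents or records the agent consulted
    approved_by: Optional[str] = None  # human approver, if a checkpoint applied
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def _digest(record: Dict, prev_hash: str) -> str:
    # Canonical JSON (sorted keys) so identical content always hashes the same.
    payload = json.dumps({"record": record, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log. Each entry carries the hash of the previous entry, so
    editing a past record, or removing one from the middle, breaks the chain."""

    def __init__(self) -> None:
        self.entries: List[Dict] = []

    def append(self, record: AuditRecord) -> Dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = asdict(record)
        entry = {"record": body, "prev_hash": prev_hash,
                 "hash": _digest(body, prev_hash)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; False means the history was altered."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != _digest(entry["record"], prev_hash):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append(AuditRecord(
    agent_id="ap-agent-01",  # hypothetical accounts-payable agent
    action="approve_vendor_payment",
    parameters={"vendor_id": "V-1042", "amount_usd": 18500},
    rationale="Invoice matches PO-7781 and goods receipt GR-3310 within tolerance.",
    evidence=["INV-99812", "PO-7781", "GR-3310"],
))
print(log.verify())  # True

log.entries[0]["record"]["parameters"]["amount_usd"] = 185000  # simulated tampering
print(log.verify())  # False
```

The design choice worth noticing: the rationale and evidence are first-class fields, not free text buried in a debug log, which is what lets an auditor answer "why" without reading model transcripts.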

Rollback impossibility. Agents take real-world actions: sending emails, modifying records, triggering payments. Many of those consequences can't be unwound after the fact. Without a deliberate mechanism to reverse actions, one bad reasoning chain spreads irreversible changes across connected systems. Fast. The remediation cost of a single unchecked agent error in a customer-facing workflow (refunds, legal exposure, reputational damage) can easily exceed the annual budget allocated to the entire AI initiative.
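
A deliberate reversal mechanism can start with a simple rule: no step runs unless the system also knows how to undo it. The Python sketch below assumes a hypothetical `ActionJournal` that applies the compensating-action (saga) pattern: every completed step is journaled with its compensation, and rollback replays those compensations in reverse order. The in-memory `crm` dict is a stand-in for a real system of record.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class JournalEntry:
    description: str
    undo: Callable[[], None]  # compensating action that reverses this step


class ActionJournal:
    """Hypothetical saga-style journal: every step an agent completes is
    recorded with its compensating action, so a bad run can be unwound."""

    def __init__(self) -> None:
        self._entries: List[JournalEntry] = []

    def run(self, description: str, do: Callable[[], None],
            undo: Callable[[], None]) -> None:
        do()
        # Journal the step only after it has actually taken effect.
        self._entries.append(JournalEntry(description, undo))

    def rollback(self) -> List[str]:
        """Undo completed steps, most recent first; return what was reversed."""
        reversed_steps: List[str] = []
        while self._entries:
            entry = self._entries.pop()
            entry.undo()
            reversed_steps.append(entry.description)
        return reversed_steps


# Hypothetical system of record standing in for a real CRM.
crm = {"C-204": {"tier": "standard", "credit_usd": 0}}


def set_tier(customer: str, tier: str) -> None:
    crm[customer]["tier"] = tier


def adjust_credit(customer: str, delta: int) -> None:
    crm[customer]["credit_usd"] += delta


journal = ActionJournal()
previous_tier = crm["C-204"]["tier"]
journal.run("upgrade C-204 to premium",
            do=lambda: set_tier("C-204", "premium"),
            undo=lambda: set_tier("C-204", previous_tier))
journal.run("issue $250 credit to C-204",
            do=lambda: adjust_credit("C-204", 250),
            undo=lambda: adjust_credit("C-204", -250))

# A reviewer flags the run as wrong: unwind everything it did.
print(journal.rollback())
# ['issue $250 credit to C-204', 'upgrade C-204 to premium']
print(crm)  # {'C-204': {'tier': 'standard', 'credit_usd': 0}}
```

Steps with no meaningful compensation, such as an email that has already been delivered, can't be journaled this way; those are exactly the steps that belong behind a human checkpoint. A production version would also need to persist the journal and decide what happens when a compensation itself fails.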

Compliance opacity. GDPR, HIPAA, CCPA, and the EU AI Act's high-risk system requirements demand explainability and data lineage. Most current agent architectures treat these as afterthoughts. In healthcare, a HIPAA violation tied to an AI agent's undocumented data handling can carry fines of up to $1.9M per violation category per year. That's before class action exposure. The compliance review is what kills the timeline, not the technical integration.

Impact scope uncertainty. Every IT security team needs to answer one question before approving a new system: what is the worst thing this can do if it misbehaves? Agents with broad system access and no defined permission boundaries cannot answer that question. They fail the security review on principle. Every time. In government and regulated financial institutions, an agent that can't demonstrate a hard ceiling on its own actions will never clear procurement, regardless of its capabilities.
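
One way to give the security team a hard ceiling is to put a policy check between the agent and every tool call. This Python sketch assumes a hypothetical `PolicyGuard` with an action allowlist, a per-action dollar limit, and a daily cap, so the worst-case answer comes from configuration rather than from the model's behavior. The action names and limits are illustrative, and the daily reset and persistence are omitted.

```python
from dataclasses import dataclass
from typing import FrozenSet


class PermissionDenied(Exception):
    """Raised when an agent action falls outside its approved boundary."""


# Hypothetical policy layer; the limits used below are illustrative.
@dataclass(frozen=True)
class AgentPolicy:
    allowed_actions: FrozenSet[str]  # explicit allowlist; everything else is denied
    per_action_limit_usd: float      # ceiling on any single action
    daily_limit_usd: float           # ceiling on the total per day


@dataclass
class PolicyGuard:
    policy: AgentPolicy
    spent_today_usd: float = 0.0  # reset at day boundaries (omitted here)

    def authorize(self, action: str, amount_usd: float = 0.0) -> None:
        """Check an action before it runs; raise instead of letting it through."""
        if action not in self.policy.allowed_actions:
            raise PermissionDenied(f"action not permitted: {action}")
        if amount_usd > self.policy.per_action_limit_usd:
            raise PermissionDenied(f"{action} of ${amount_usd:,.2f} exceeds per-action limit")
        if self.spent_today_usd + amount_usd > self.policy.daily_limit_usd:
            raise PermissionDenied(f"{action} would exceed the daily limit")
        self.spent_today_usd += amount_usd

    def worst_case_exposure_usd(self) -> float:
        """The security team's question, answered from configuration:
        the most money this agent can move in a day, whatever it decides."""
        return self.policy.daily_limit_usd


guard = PolicyGuard(AgentPolicy(
    allowed_actions=frozenset({"read_invoice", "issue_refund"}),
    per_action_limit_usd=500.0,
    daily_limit_usd=2_000.0,
))

guard.authorize("issue_refund", 400.0)  # within both limits: allowed
try:
    guard.authorize("delete_customer")  # not on the allowlist
except PermissionDenied as err:
    print(err)  # action not permitted: delete_customer
print(guard.worst_case_exposure_usd())  # 2000.0
```

The ceiling only holds if every money-moving call is routed through the guard, which is why this check belongs in the integration layer the security team controls, not in the agent's prompt.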

The Reliability Stack Nobody Is Building

What enterprise agents actually need isn't smarter AI. It's the same boring infrastructure we demanded from every other mission-critical system before we trusted it with real decisions.

Full records of what the agent did and why, in language an auditor can read. Human checkpoints before the agent touches anything that moves money or changes a customer record. The ability to undo (not "contact support to reverse this" but actual rollback). And integration with the same access controls that govern every other system in your environment.

None of this is complicated to describe. Almost none of it exists yet in the products your vendors are demoing.
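
To show how small the core of a human checkpoint can be, here is a Python sketch of a hypothetical `ApprovalGate`: actions tagged as sensitive (here, anything that moves money or changes a customer record) are parked until a named person approves them, while low-risk actions run immediately. The action kinds, summaries, and approver ID are assumptions for the example; a real gate would also write each decision to the audit trail.

```python
import itertools
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, List, Optional


@dataclass
class PendingAction:
    action_id: int
    kind: str
    summary: str                   # what the human reviewer sees before approving
    execute: Callable[[], str]
    approved_by: Optional[str] = None


class ApprovalGate:
    """Hypothetical gate: runs low-risk actions immediately and parks
    sensitive ones until a named human approves them."""

    def __init__(self, sensitive_kinds: Iterable[str]) -> None:
        self.sensitive_kinds = set(sensitive_kinds)
        self.pending: Dict[int, PendingAction] = {}
        self.approved: List[PendingAction] = []  # who approved what
        self._ids = itertools.count(1)

    def submit(self, kind: str, summary: str, execute: Callable[[], str]) -> str:
        if kind not in self.sensitive_kinds:
            return execute()  # low-risk: no checkpoint needed
        action_id = next(self._ids)
        self.pending[action_id] = PendingAction(action_id, kind, summary, execute)
        return f"pending approval (#{action_id})"

    def approve(self, action_id: int, approver: str) -> str:
        action = self.pending.pop(action_id)  # KeyError if unknown or already decided
        action.approved_by = approver
        self.approved.append(action)
        return action.execute()

    def reject(self, action_id: int) -> None:
        self.pending.pop(action_id)  # the action is discarded, never executed


gate = ApprovalGate({"move_money", "update_customer_record"})
print(gate.submit("summarize_contract", "Summarize MSA-12",
                  lambda: "summary ready"))            # summary ready
print(gate.submit("move_money", "Pay vendor V-1042 $18,500",
                  lambda: "payment sent"))             # pending approval (#1)
print(gate.approve(1, approver="finance.controller"))  # payment sent
```

The point of the sketch is the default: a sensitive action cannot execute through `submit` alone, so "the agent did it on its own" is structurally impossible for that class of action.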

The parallel to cloud is close. AWS launched in 2006. Enterprise adoption didn't scale until audit logging, compliance certifications, and granular access controls arrived between 2010 and 2014. The capability was there from day one. The trust infrastructure took eight years. Agent infrastructure is in the 2007 phase: capable, pre-compliance, and being sold to enterprises as if it's 2014. Sound familiar? The demo was never the product. The audit trail is.

The industries most exposed right now are financial services, healthcare, and government, precisely because they operate under the heaviest compliance obligations and have the least tolerance for "we'll add governance later." Early warning signs that your own organization is in trouble: your AI vendor can't name the specific regulation their logging architecture addresses; your pilot has no defined human approval step before the agent executes financial or customer-record changes; and nobody in the room can answer "how do we turn this off and reverse what it did."

Who to Buy From, Who to Wait On, What to Do Now

Buy with confidence from vertical AI vendors who built compliance architecture before they built features. These vendors can hand your legal team a data lineage diagram on the first call, not the fifth. Think industry-specific deployments where the vendor has already absorbed the regulatory requirements of your sector. They compete on auditability, not benchmark scores.

Put on hold any horizontal agent platform that leads with capability and treats governance as a roadmap item. The answer to "how does your legal team approve this?" should not be "great question, we're working on it." The approval used to sit with the CTO. Now it sits with the CISO and the General Counsel, and your vendor's pitch deck needs to reflect that reality.

Watch the infrastructure category closely. The company that builds the observability and access-control layer for enterprise agents, the equivalent of what Datadog and Okta built for cloud infrastructure, will be a multi-billion-dollar outcome. That company does not yet exist in recognizable form. When it emerges, it will win enterprise AI distribution faster than any model provider.

By 2027, the leading enterprise agent platforms will be differentiated not by model quality (that will be commoditized) but by how deeply their audit trail integrates with your existing compliance infrastructure. The EU AI Act's enforcement timeline will accelerate this for any company with European operations. A high-profile agent failure in financial services or healthcare before Q1 2027 will accelerate it everywhere else.

What to Do This Quarter

These are not strategic initiatives. They are this quarter's actions.

Ask your current AI vendors one question: "Show me the complete record of what your agent did in our last pilot: not just what actions it took, but the reasoning behind each decision, in a format my auditors can read." The answer will tell you everything about whether this vendor is ready for your production environment.

Ask your General Counsel to review your current AI pilot agreements for indemnification language. Specifically: who bears liability if an agent makes a financially material error or triggers a regulatory violation? Most current contracts are silent on this. That silence is a risk you are currently holding without knowing it.

Have a budget conversation with your CISO about agent permission architecture before the next pilot launches. Not after. The question isn't "is this AI secure?" It's "what is the maximum failure scope if this agent misbehaves, and is that acceptable?" If your CISO hasn't been in the room for your AI vendor evaluations, that is the governance gap most likely to produce a painful headline.

Structure your next AI vendor meeting differently. Give the last 20 minutes to your General Counsel and CISO, not your CTO. Ask the vendor to walk through a scenario where the agent makes a mistake (not a technical failure, but a wrong decision) and explain exactly how you find it, stop it, reverse it, and document it for regulators. Vendors who have built for production will welcome this conversation. Vendors who haven't will change the subject.

Capability without governability is a demo, not a product. The organizations that internalize this before their first high-profile failure will have a durable advantage over those that learn it the hard way. The infrastructure to govern enterprise agents responsibly is not yet mature. Your job this quarter is to make sure you're not the case study that proves why it needed to exist.

> Key Takeaway: Enterprise AI agent deployments are failing not because the AI is underperforming, but because the operational infrastructure was never built: no audit trails, no human checkpoints, no ability to reverse actions. The executives who demand these requirements before a pilot launches, not after, will be the ones who actually show AI on their income statement.